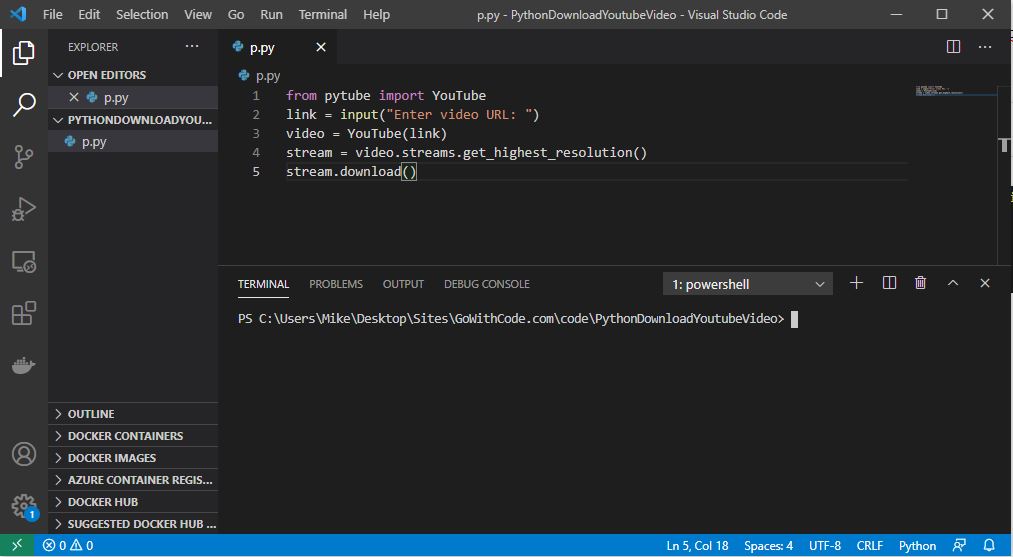

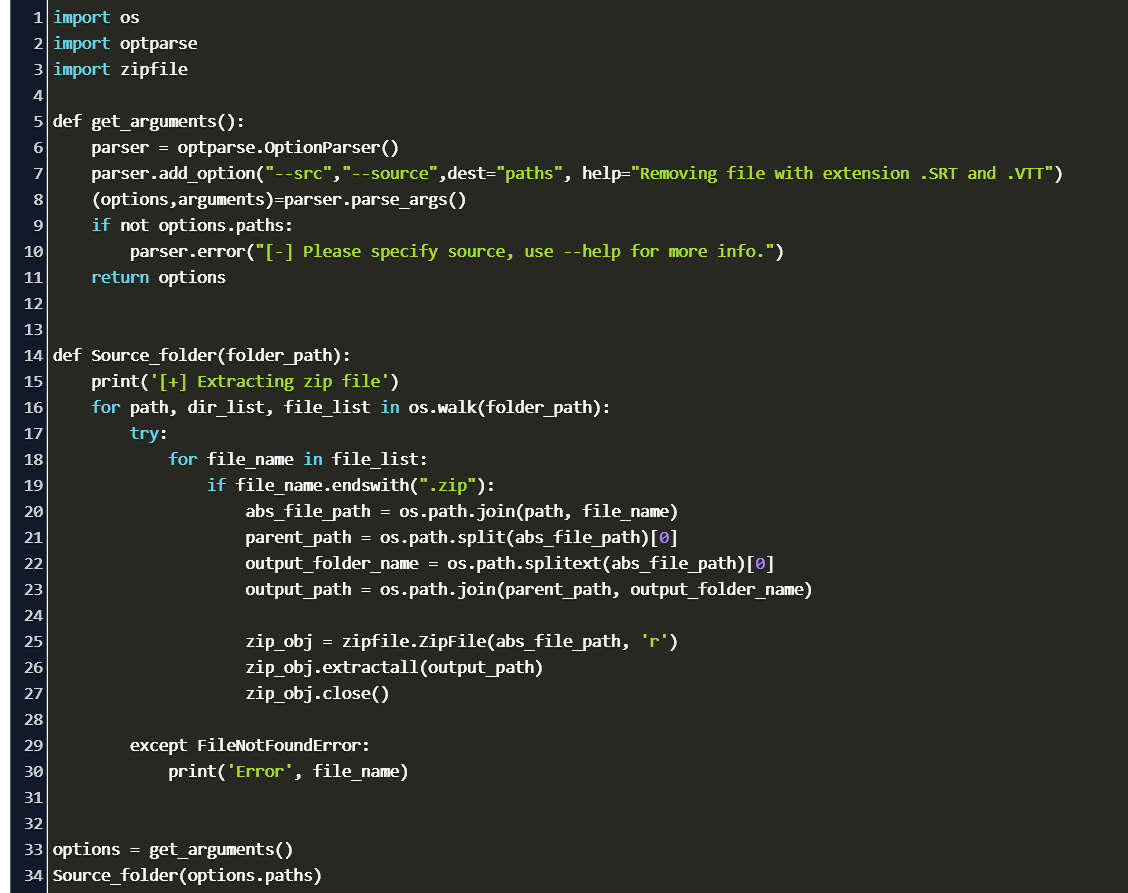

The web crawling and scraping framework used for the examples, trafilatura, can be easily installed with pip (`pip install trafilatura`). The following snippet downloads a list of URLs with a pool of four threads:

```python
from concurrent.futures import ThreadPoolExecutor, as_completed
from trafilatura import fetch_url

# buffer list of URLs (fill in the URLs to download)
bufferlist = []

# download pool: 4 threads
with ThreadPoolExecutor(max_workers=4) as executor:
    # map each submitted download to its URL
    future_to_url = {executor.submit(fetch_url, url): url for url in bufferlist}
    for future in as_completed(future_to_url):
        # do something here:
        url = future_to_url[future]
        print(url, future.result())
```

Asynchronous processing is probably even more efficient in the context of file downloads from a variety of websites.

Much better: throttling threads

A safe but efficient option consists in throttling requests based on the domains/websites from which content is downloaded. A variant of multi-threaded downloads with throttling is implemented in the framework.
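The idea of per-domain throttling can be sketched with the standard library alone. This is an illustration under stated assumptions, not the framework's own implementation: `DomainThrottle` and `throttled_download` are hypothetical names, and `fetch` stands for any callable that takes a URL (for instance `fetch_url` from the snippet above).

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor, as_completed
from urllib.parse import urlsplit


class DomainThrottle:
    """Hypothetical helper: enforce a minimum delay between requests to one host."""

    def __init__(self, min_delay=2.0):
        self.min_delay = min_delay
        self._next_slot = {}  # host -> earliest time allowed for the next request
        self._lock = threading.Lock()

    def wait(self, url):
        host = urlsplit(url).netloc
        with self._lock:
            now = time.monotonic()
            # reserve the next free time slot for this host
            slot = max(now, self._next_slot.get(host, now))
            self._next_slot[host] = slot + self.min_delay
        # sleep outside the lock so that other hosts are not held up
        time.sleep(max(0.0, slot - now))


def throttled_download(urls, fetch, min_delay=2.0, max_workers=4):
    """Download URLs in a thread pool, spacing out requests to the same host."""
    throttle = DomainThrottle(min_delay)

    def task(url):
        throttle.wait(url)
        return fetch(url)

    results = {}
    with ThreadPoolExecutor(max_workers=max_workers) as executor:
        future_to_url = {executor.submit(task, url): url for url in urls}
        for future in as_completed(future_to_url):
            results[future_to_url[future]] = future.result()
    return results
```

In this sketch a worker thread sleeps while it waits for its slot, so a long run of URLs from a single host can keep the whole pool idle. Interleaving URLs from different hosts before submitting them is one way to limit that effect.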

PYTHON DOWNLOAD FROM URL HOW TO

In the following, we will see how to perform downloads sequentially and in parallel while essential politeness rules are taken care of, a fairly simple solution to the problem.

In order to retrieve multiple web pages at once, it makes sense to retrieve as many domains as possible in parallel. However, a number of issues arise when one gets to the details of the implementation. Massive downloads can be a burden for the network, the target servers or one’s own computers. Parallel computing can also lead to performance problems, for example when available cores are not used to their full capacity.

In addition, both single and concurrent downloads should respect basic “politeness” rules. Machines consume resources on the visited systems and they often visit sites unprompted. That is why issues of schedule, load, and politeness come into play. Mechanisms exist for public sites not wishing to be crawled to make this known to the crawling agent. We will see below what these mechanisms are and how to take them into account.

This additional constraint means we have to not only care for download speed but also manage a register of known websites and apply the rules so as to keep maximizing speed while not being too intrusive. To keep an eye on all these constraints at once, the best option is to use an open-source framework you can trust or take under scrutiny.
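One widely used mechanism of this kind is the `robots.txt` file, which Python's standard library can parse. The sketch below is only an illustration: the rules in `ROBOTS_TXT`, the user agent name `my-crawler` and the `example.org` URLs are made up, and a real crawler would fetch the file from the target site (for example with the parser's `set_url()` and `read()` methods) instead of using an inline string.

```python
from urllib.robotparser import RobotFileParser

# Made-up rules for illustration; a real file comes from the target website
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""


def build_rules(robots_txt):
    """Parse the text of a robots.txt file into a rule set."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


rules = build_rules(ROBOTS_TXT)

# Is the (hypothetical) agent "my-crawler" allowed to fetch these pages?
allowed_home = rules.can_fetch("my-crawler", "https://example.org/index.html")
allowed_private = rules.can_fetch("my-crawler", "https://example.org/private/data.html")

# Delay in seconds requested by the site, or None if it is not specified
delay = rules.crawl_delay("my-crawler")

print(allowed_home, allowed_private, delay)
```

Keeping one such rule set per website is a simple form of the register of known websites mentioned above: the crawl delay can set the minimum pause between requests to that site, and `can_fetch` can filter URLs before they are queued.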

PYTHON DOWNLOAD FROM URL SERIES

Problem description

Efficient web data collection

A main objective of data collection over the Internet such as web crawling is to efficiently gather as many useful web pages as possible. One way to reach this goal is to filter the links that are to be fetched in order to maximize their adequacy to the data collection project, for example by selecting links corresponding to a series of target domains, to a target language, a topic, etc. A previous blog post addresses practical ways to perform URL selection.

Another way is to maximize throughput by working on download speed and bandwidth capacity. This part is indeed highly relevant as transmitting data over the network is very often slower than further data processing performed locally. As such, optimizing this phase is crucial for anyone wishing to gather data from a series of websites.
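Link filtering by target domain can be illustrated in a few lines. This is a minimal sketch and not the method of the blog post mentioned above: `filter_by_domain` is a hypothetical helper and the example domains are placeholders.

```python
from urllib.parse import urlsplit


def filter_by_domain(urls, target_domains):
    """Keep only the URLs whose host is a target domain or one of its subdomains."""
    targets = {domain.lower() for domain in target_domains}
    kept = []
    for url in urls:
        # hostname is None for strings that are not absolute URLs
        host = (urlsplit(url).hostname or "").lower()
        if any(host == d or host.endswith("." + d) for d in targets):
            kept.append(url)
    return kept


candidates = [
    "https://example.org/page1",
    "https://blog.example.org/post",
    "https://other.example.com/page",
    "not a url",
]
selected = filter_by_domain(candidates, ["example.org"])
print(selected)
```

Filtering by language or topic would need more than the URL string in most cases, so this sketch only covers the domain criterion.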

Optimizing downloads is crucial to gather data from a series of websites. However, one should respect “politeness” rules. Here is a simple way to keep an eye on all these constraints at once.

PYTHON DOWNLOAD FROM URL CODE

Date Fri 05 November 2021 Category Tutorial Tags code snippet